QoSINUS

QoS INvocation and Usage System


Introduction

Nowadays, many routers used in high-performance core networks
implement QoS functionality. This is true, for example, of VTHD,
the core network on which the e-Toile grid is built. The routers of
this high-performance network provide differentiated services
(DiffServ) classes for the network's traffic. Four classes are
available: EF, AF/TCP, AF/UDP and BE. Data packets are marked with
one of those classes and receive the forwarding treatment associated
with that class at the routers.

Applications need a certain level of QoS to be ensured for the
transmission of their data, but translating that need into a DiffServ
class might not be straightforward for them. It would be preferable if
they could specify the QoS level in a more abstract manner. For
example, they could be given the option to specify a minimum
bandwidth, a maximum loss and/or a maximum delay for their data
streams. They might even want to define their request using not only
quantitative values but also qualitative values, like "high", "medium"
or "low". Say, for example, that an application needs to transmit an
audio stream. This kind of application is not much concerned about
packet loss and requires a medium bandwidth, but has a strong need for
low transmission delay. It could then express its QoS need as follows:
"delay=low, rate=medium".
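
As an illustration of such an abstract request, here is a minimal sketch of how a string like "delay=low, rate=medium" could be turned into a structured request. The function name `parse_qos_request` and the accepted syntax are assumptions made for this example; they are not part of the QoSINUS client API.

```python
# Hypothetical helper: not part of QoSINUS, shown only to illustrate the idea.
QUALITATIVE_LEVELS = ("low", "medium", "high")
PARAMETERS = {"delay", "rate", "loss"}


def parse_qos_request(text):
    """Parse 'delay=low, rate=medium' into a dict.

    Qualitative values are kept as strings; anything else is read as a
    number (the unit is left open here, as the source does not specify one).
    """
    request = {}
    for item in text.split(","):
        item = item.strip()
        if not item:
            continue
        key, sep, raw = item.partition("=")
        key, raw = key.strip().lower(), raw.strip().lower()
        if not sep or key not in PARAMETERS:
            raise ValueError(f"unknown or malformed parameter: {item!r}")
        if raw in QUALITATIVE_LEVELS:
            request[key] = raw
        else:
            request[key] = float(raw)  # quantitative value
    return request


audio_request = parse_qos_request("delay=low, rate=medium")
```

Parameters that the application does not mention (loss, in the audio example) are simply absent from the result, which matches the idea that the application only states what it cares about.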

The programmable QoS system that we propose is the glue between such a
high-level application QoS request and the DiffServ forwarding
treatment of the backbone routers. The aim of the architecture is to
map a high-level QoS request onto a DiffServ class, using monitoring
information. To provide that functionality, an active service runs on
the router located at the ingress of the DiffServ-enabled backbone. It
is responsible for monitoring the behavior of each DiffServ class in
the core network, in terms of delay, loss and rate. Upon reception of
a high-level QoS request, it uses this monitoring information to
determine the DiffServ class that best matches the request. When the
client's data stream is emitted, it is also up to this active service
to mark the data packets with the chosen class, so that the
corresponding DiffServ forwarding treatment is applied in the core
network and the requested QoS is ensured.
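
The selection step can be pictured with the following sketch. It assumes a quantitative request and one possible reading of "best match": the first class, in an assumed order of preference, whose measured behavior satisfies every bound in the request. The types, the preference order and the monitoring figures are invented for illustration and do not come from QoSINUS or from VTHD measurements.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class ClassStats:
    """Measured behavior of one DiffServ class (hypothetical units)."""
    delay_ms: float
    loss: float        # fraction of packets lost
    rate_mbps: float   # rate currently achievable


@dataclass
class Request:
    """Bounds requested by the application; None means 'no requirement'."""
    max_delay_ms: Optional[float] = None
    max_loss: Optional[float] = None
    min_rate_mbps: Optional[float] = None


# Assumption: try the least demanding class first, the most demanding last.
PREFERENCE = ["BE", "AF/UDP", "AF/TCP", "EF"]

# Invented monitoring figures, for illustration only.
EXAMPLE_MONITORING = {
    "EF": ClassStats(delay_ms=5, loss=0.0, rate_mbps=10),
    "AF/TCP": ClassStats(delay_ms=20, loss=0.001, rate_mbps=50),
    "AF/UDP": ClassStats(delay_ms=15, loss=0.01, rate_mbps=50),
    "BE": ClassStats(delay_ms=80, loss=0.05, rate_mbps=100),
}


def satisfies(stats, req):
    """True if the measured class behavior meets every requested bound."""
    return (
        (req.max_delay_ms is None or stats.delay_ms <= req.max_delay_ms)
        and (req.max_loss is None or stats.loss <= req.max_loss)
        and (req.min_rate_mbps is None or stats.rate_mbps >= req.min_rate_mbps)
    )


def choose_class(monitoring, req):
    """Return the first class in PREFERENCE that satisfies req, or None."""
    for name in PREFERENCE:
        stats = monitoring.get(name)
        if stats is not None and satisfies(stats, req):
            return name
    return None
```

Other definitions of "best" are possible, for instance scoring each class by how closely it fits the request; the sketch only shows where the monitoring information enters the decision.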

The system described so far provides a means to request a certain
level of QoS and to actually provide that QoS to a transmission. On
top of that mechanism, a policy has to be enforced that defines how
the QoS is negotiated. The goal here is to control which QoS level may
be granted to which stream. The e-Toile middleware includes a
component, called the allocator, that takes care of job allocation on
the Grid. That component is responsible for choosing the computing
elements on which a job is going to be executed. As such, it is the
piece of software that decides the destination of the grid's data
streams. This allocator seems like a good candidate to enforce the QoS
policy (that is, to ensure the allocation of network resources), in
the same way that it ensures the allocation of computing power.

Use case examples in a Grid context

To help understand how the system works and might be used, here are
four use cases of QoSINUS showing how it could be integrated into a
Grid environment.

-

Use case 0

In this first, straightforward case, an application wants to control
the DSCP that is used in the packets of its streams. To deal with that
case, QoSINUS includes an override mechanism that allows the
application to bypass it completely.

-

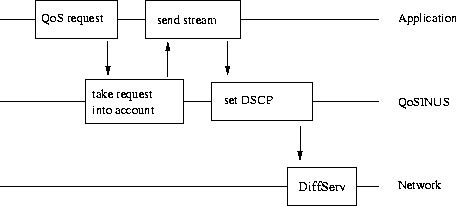

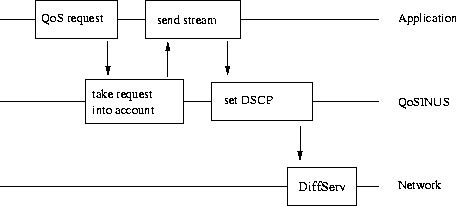

Use case 1

Here, the sender of the data streams is responsible for specifying the
QoS level for its streams to QoSINUS. That is the case of an
application that sends data independently of the Grid middleware. It
is also the case of data streams specific to the Grid middleware, such
as the streams that flow when the Grid user interface requests the
display of the Grid's usage statistics.

The figure below shows this kind of use case.

-

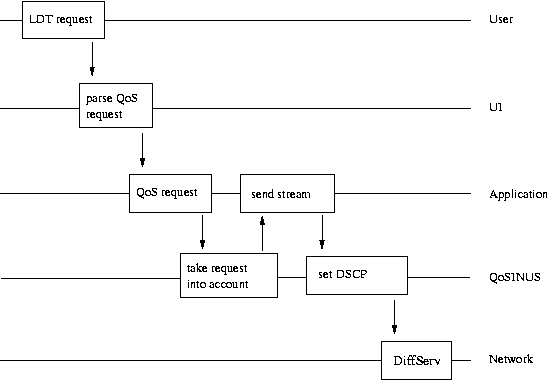

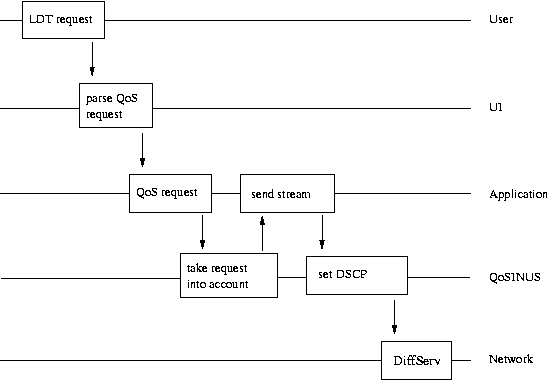

Use case 2

The QoS might also be specified directly by the Grid's user, who might
want, for example, to define the throughput to be guaranteed for the
transfer of the job's result data. In this case, the QoS request is
part of the job submission parameters (expressed in LDT, "langage
d'expression des travaux", i.e. job expression language). The Grid's
UI component is responsible for extracting this request, as shown
below.

-

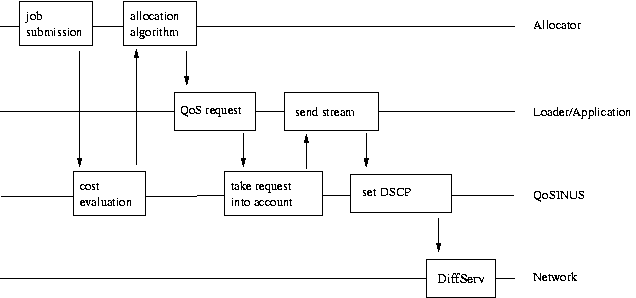

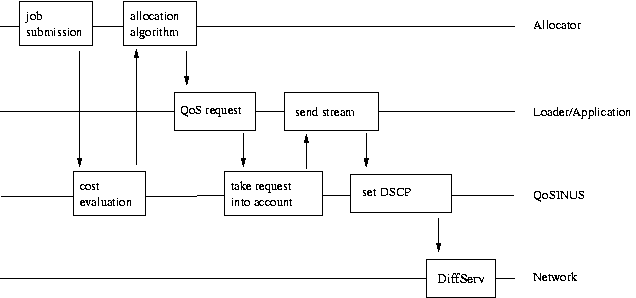

Use case 3

Finally, it might be up to the grid middleware itself to define the
QoS to be guaranteed for the data streams circulating in the grid's
network. Here the Grid's allocator, which is responsible for deciding
where a job is going to be executed, sees the network as a resource
just like computing or storage elements. It makes its allocation
decision by taking into account an evaluation of the network transfer
cost. Once the job is allocated, the Grid's middleware specifies the
QoS for the streams generated by the job's loading procedures, as
shown below.

Current implementation

Architecture

QoSINUS is an active service that runs in the TAMANOIR active
networking execution environment. It consists of two parts: a client
API that applications use to request that QoS be ensured for their
data streams, and an active service that runs on TAMANOIR active
routers deployed at the edge of the DiffServ domains.

Client API

The following functions are available for the client applications to use:

-

QoS_Set:

This function lets an application specify its QoS needs in terms of
delay, rate and loss. The QoS requirements are either quantitative
values or qualitative values (high, medium, low).

-

QoS_Invoke:

This function is called by the client application to let QoSINUS know
that it is about to start sending the data stream for which QoS was
requested.

-

QoS_Release:

Once it has finished sending its data, the application calls this
function to let QoSINUS know that it can release the QoS resources
allocated for its stream.

-

QoS_Request:

This function lets the client application specify its QoS needs in a
more detailed way than QoS_Set. The QoS request is expressed in an XML
format that may include the following pieces of information:

-

the stream ID, identifying the stream by means of its source, its
destination, its protocol or a DSCP.

-

the conformance, describing the rate that the application will not

exceed.

-

the excess treatment, describing how to handle non-conforming packets
(i.e. drop, shape or remark them).

-

the guarantees requested, in terms of delay, rate and loss rate.

-

the schedule specifying when and for how long the stream will be sent.
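
To make the shape of such a request more concrete, here is a small sketch that parses an XML document carrying the five pieces of information listed above. The element and attribute names (`sls`, `stream`, `conformance`, `excess`, `guarantees`, `schedule`) and all values are invented for this example; the actual QoSINUS XML schema is not given here and may differ.

```python
import xml.etree.ElementTree as ET

# The three excess treatments named in the list above.
EXCESS_TREATMENTS = {"drop", "shape", "remark"}

# Hypothetical request document: tag names, attributes and values are
# assumptions, not the real QoSINUS format.
SAMPLE_REQUEST = """\
<sls>
  <stream src="10.0.0.1" dst="10.0.1.7" protocol="udp"/>
  <conformance rate="2000"/>
  <excess treatment="drop"/>
  <guarantees delay="low" rate="medium"/>
  <schedule start="0" duration="600"/>
</sls>
"""


def parse_sls(xml_text):
    """Return {section name: attribute dict} for each child of the root."""
    root = ET.fromstring(xml_text)
    sls = {child.tag: dict(child.attrib) for child in root}
    treatment = sls.get("excess", {}).get("treatment")
    if treatment is not None and treatment not in EXCESS_TREATMENTS:
        raise ValueError(f"unsupported excess treatment: {treatment!r}")
    return sls


request = parse_sls(SAMPLE_REQUEST)
```

Since the format "may include" each piece of information, the sketch treats every section as optional and only validates the excess treatment when one is present.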

Active service

The QoSINUS active service can be broken down into several components,
as shown in the class diagram below. This service runs on the TAMANOIR
active routers located at the DiffServ domain edges.

-

QaSEngine is the heart of the system. It is an infinite loop handling three kinds of events:

- reception of a new QoS request

- a command to release QoS resources

- timer pulses used to detect "dead" streams and automatically
release their resources after a certain period of inactivity (a
soft-state mechanism that provides robustness in case the client
stops before releasing its resources).

-

QaSExecEnvIf is the interface with the TAMANOIR environment, through
which packets are received and sent.

-

QaSMapper is the algorithm that maps a QoS request to a DiffServ class

to be used in the DiffServ domain. QaSStaticMapper,

QaSAdaptativeMapper and QaSSporadicMapper are different

implementations of that algorithm.

-

QaSStreamStatus is the list of streams that the system handles. For
each stream, its identifier, the SLS corresponding to the QoS
request, the DiffServ class used, and an activity flag are saved.

-

QaSClassStatus includes pieces of information about the DiffServ

classes to be found in the DiffServ domain. For each class, the total

allocated bandwidth and the available bandwidth are kept up to date.

-

QaSKernelIf interfaces QoSINUS with the Linux kernel's netfilter and
tc modules. Those modules are used to set the DSCP of the packets and
to limit the bandwidth used by a stream according to the decisions of
QaSMapper.

-

QaSMonitoringIf provides QaSMapper with monitoring information for

each DiffServ class in the DiffServ domain.

-

QaSPolicy is a hook allowing a policy management system, that could be

the grid's allocator, to accept or deny a QoS request.

-

QaSXMLIf is used to parse the QoS request expressed as an XML SLS

document.

-

QaSObsIf makes it possible to publish status information about the
service. This status can be viewed using a tool such as MapCenter.
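
The soft-state behavior described for QaSEngine, combined with the activity flag kept in QaSStreamStatus, can be sketched as follows. This is an illustration under assumptions, not the QoSINUS code: the class and function names are invented, the flag is assumed to be cleared at every timer pulse, and a finite list of events stands in for the infinite loop.

```python
class SoftStateTable:
    """Tracks streams and drops those that stay silent between two timer pulses."""

    def __init__(self):
        self._seen = {}  # stream id -> activity flag

    def on_request(self, stream_id):
        """A new QoS request registers the stream as active."""
        self._seen[stream_id] = True

    def on_packet(self, stream_id):
        """Traffic on a known stream sets its activity flag."""
        if stream_id in self._seen:
            self._seen[stream_id] = True

    def on_release(self, stream_id):
        """Explicit release; unknown ids are ignored."""
        self._seen.pop(stream_id, None)

    def on_timer(self):
        """Release streams with no activity since the last pulse."""
        dead = [sid for sid, seen in self._seen.items() if not seen]
        for sid in dead:
            del self._seen[sid]
        for sid in self._seen:
            self._seen[sid] = False  # must be set again before the next pulse
        return dead

    def streams(self):
        return sorted(self._seen)


def run(table, events):
    """Dispatch (kind, stream_id) events; return ids released by timeouts."""
    expired = []
    for kind, stream_id in events:
        if kind == "request":
            table.on_request(stream_id)
        elif kind == "packet":
            table.on_packet(stream_id)
        elif kind == "release":
            table.on_release(stream_id)
        elif kind == "timer":
            expired.extend(table.on_timer())
        else:
            raise ValueError(f"unknown event kind: {kind!r}")
    return expired
```

With this scheme a stream is released after at most two timer periods of silence, so a client that stops without releasing its stream does not hold its resources indefinitely.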

Mapping algorithm

Two mapping strategies have been implemented so far.

-

Static mapping is a first, straightforward implementation. Here the
bandwidth available at the DiffServ domain ingress point is statically
shared among the DiffServ classes. The mapping between a QoS request
and a DiffServ class is defined by simple static rules, like "short
delay implies Premium DiffServ class".

Upon reception of a QoS request, a DiffServ class is thus chosen. If
enough bandwidth is still available for that class, the QoS request is
accepted and the available bandwidth of the chosen class is updated.
Otherwise the QoS request is rejected.

-

Dynamic mapping adopts another approach. Here, the performance of the
DiffServ classes (in terms of round-trip time, loss rate, and possibly
available bandwidth) is measured dynamically. Based on that
information, the system periodically chooses the best DiffServ class
to use for each stream and can thus adapt to performance variations of
the DiffServ classes in the core network.
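
The static strategy amounts to a rule lookup followed by an admission test, as in the sketch below. Only the rule "short delay implies Premium" comes from the text above; the default class name, the bandwidth shares and the class `StaticMapper` are assumptions made for the example and are not the QaSStaticMapper implementation.

```python
# Static rules as (predicate, class) pairs, checked in order.
# Only the first rule is taken from the text; the default is an assumption.
RULES = [
    (lambda req: req.get("delay") == "low", "Premium"),
]
DEFAULT_CLASS = "BestEffort"


class StaticMapper:
    """Static class selection plus per-class bandwidth admission control."""

    def __init__(self, shares_kbps):
        # Bandwidth statically assigned to each class at the ingress point.
        self.available = dict(shares_kbps)

    def choose_class(self, request):
        for matches, diffserv_class in RULES:
            if matches(request):
                return diffserv_class
        return DEFAULT_CLASS

    def admit(self, request, rate_kbps):
        """Return the class if the request is accepted, None if rejected."""
        diffserv_class = self.choose_class(request)
        if rate_kbps > self.available[diffserv_class]:
            return None  # not enough bandwidth left in that class
        self.available[diffserv_class] -= rate_kbps
        return diffserv_class

    def release(self, diffserv_class, rate_kbps):
        """Give the bandwidth back when the stream ends."""
        self.available[diffserv_class] += rate_kbps


mapper = StaticMapper({"Premium": 1000, "BestEffort": 5000})
```

A rejected request leaves the bookkeeping untouched, so a client may retry later or with a lower rate. The dynamic strategy differs in that the class choice depends on measured performance and is revisited periodically instead of being fixed by rules.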
