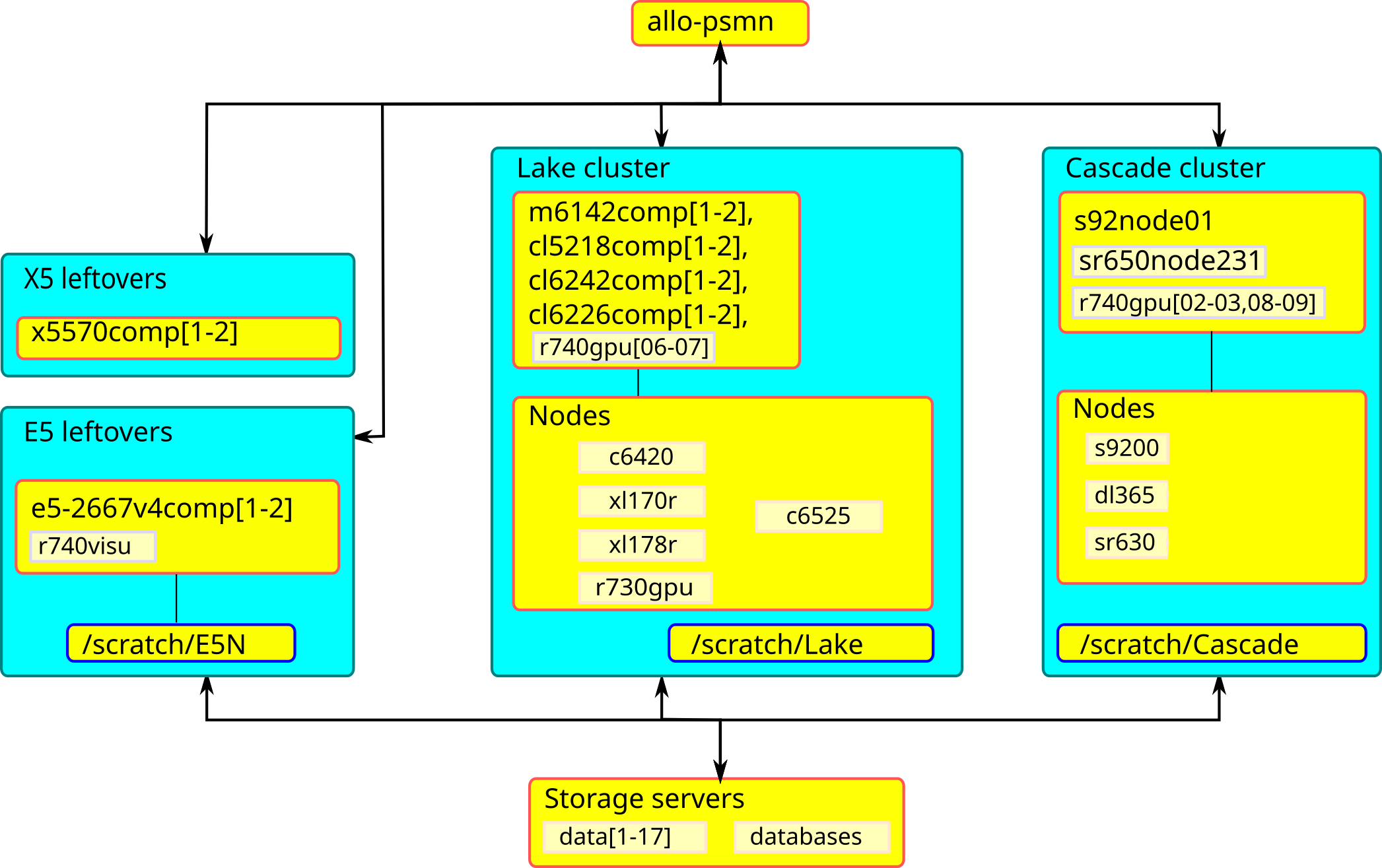

Clusters/Partitions overview

Fig. 35 A view of PSMN clusters

Note

Clusters are hardware assemblies (including their dedicated network, etc.). Partitions are logical divisions within clusters.

Cluster E5

Warning

E5 end of life: the cluster was powered off at the end of April 2025.

Cluster Lake

Lake scratches are available from all nodes of the partition on the following paths:

/scratch/

├── Bio

├── Chimie

├── Lake (general purpose scratch)

└── Themiss

‘Bio’ and ‘Chimie’ scratches are meant for users of the respective labs and teams.

‘Themiss’ scratch is reserved for Themiss ERC users.

‘Lake’ is for all users (general purpose scratch).

Nodes c6420node[049-060] and r740bigmem201 have a local scratch on /scratch/disk/ (120-day lifetime residency; request it with --constraint=local_scratch).

Choose ‘Lake’ environment modules (see Modular Environment).

See Using X2Go for data visualization for information on visualization servers.
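The `--constraint=local_scratch` option above is presumably a Slurm job constraint. As a minimal sketch, assuming jobs are submitted with `sbatch` and that the Slurm partition is literally named `Lake` (both assumptions; the job-submission pages of this documentation are authoritative), the hypothetical helper `lake_header` below builds a batch-script header that asks for a node with local scratch:

```python
def lake_header(job_name: str, ntasks: int, walltime: str,
                local_scratch: bool = False) -> str:
    """Return a Slurm batch-script header for a job on the Lake partition.

    Assumption: the Slurm partition is named "Lake", as in this page's headings.
    """
    lines = [
        "#!/bin/bash",
        f"#SBATCH --job-name={job_name}",
        "#SBATCH --partition=Lake",
        f"#SBATCH --ntasks={ntasks}",
        f"#SBATCH --time={walltime}",  # days-hours:minutes:seconds
    ]
    if local_scratch:
        # Only schedule the job on nodes that expose /scratch/disk/
        lines.append("#SBATCH --constraint=local_scratch")
    return "\n".join(lines) + "\n"


print(lake_header("demo", ntasks=32, walltime="1-00:00:00", local_scratch=True))
```

Appending the job's own commands to this header gives a complete script; leaving `local_scratch=False` lets the scheduler use any Lake node.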

Partition E5-GPU

Hardware specifications per node:

| login nodes | CPU Model | cores | RAM | RAM/core | InfiniBand | GPU | local scratch |
|---|---|---|---|---|---|---|---|
| r730gpu01 | E5-2637v3 @ 3.5GHz | 8 | 128 GiB | 16 GiB/core | 56 Gb/s | 2x RTX2080Ti | /scratch/Lake |

This partition is open to everyone, with an 8-day walltime (and yes, it is in the Lake cluster).

Hint

Best use case: GPU jobs, training, testing, sequential jobs, small parallel jobs (<32c)

Choose ‘E5’ environment modules (see Modular Environment).

Partition E5-GPU has access to Lake scratches (see Cluster Lake above) but not to /scratch/E5N.

Partition Lake-short

Hardware specifications per node:

| login nodes | CPU Model | cores | RAM | RAM/core | InfiniBand | GPU | local scratch |
|---|---|---|---|---|---|---|---|
| m6142comp[1-2] | Gold 5118 @ 2.3GHz | 24 | 96 GiB | 4 GiB/core | 56 Gb/s | N/A | |

This partition is open to everyone, with a 4-hour walltime.

Hint

Best use case: tests, short sequential jobs, short and small parallel jobs (<96c)
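Walltime limits differ between partitions: 4 hours here, 8 days on most of the others. The hedged Python sketch below formats a duration in Slurm's `days-hours:minutes:seconds` syntax and rejects requests above the limits quoted on this page. The `WALLTIME_LIMITS` table and the function names are illustrative, the keys are assumed to match the Slurm partition names, and Emerald-bigmem is left out because no limit is given for it here.

```python
from datetime import timedelta

# Walltime limits quoted on this page (keys assumed to match Slurm partition names)
WALLTIME_LIMITS = {
    "Lake-short": timedelta(hours=4),
    "E5-GPU": timedelta(days=8),
    "Lake": timedelta(days=8),
    "Lake-bigmem": timedelta(days=8),
    "Epyc": timedelta(days=8),
    "Cascade": timedelta(days=8),
    "Cascade-GPU": timedelta(days=8),
}


def slurm_time(d: timedelta) -> str:
    """Format a duration as a Slurm days-hours:minutes:seconds string."""
    total = int(d.total_seconds())
    days, rem = divmod(total, 86400)
    hours, rem = divmod(rem, 3600)
    minutes, seconds = divmod(rem, 60)
    return f"{days}-{hours:02d}:{minutes:02d}:{seconds:02d}"


def check_walltime(partition: str, d: timedelta) -> str:
    """Return the --time value, or raise ValueError if it exceeds the limit."""
    limit = WALLTIME_LIMITS[partition]
    if d > limit:
        raise ValueError(
            f"{partition}: {slurm_time(d)} exceeds limit {slurm_time(limit)}")
    return slurm_time(d)


print(check_walltime("Lake-short", timedelta(hours=3, minutes=30)))  # 0-03:30:00
```

A request exactly equal to the limit (for instance `timedelta(hours=4)` on Lake-short) is accepted; anything longer raises before the job is submitted.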

Partition Lake

Hardware specifications per node:

| login nodes | CPU Model | cores | RAM | RAM/core | InfiniBand | GPU | local scratch |
|---|---|---|---|---|---|---|---|
| m6142comp[1-2] | Gold 6142 @ 2.6GHz | 32 | 384 GiB | 12 GiB/core | 56 Gb/s | N/A | |
| | Gold 6142 @ 2.6GHz | 32 | 384 GiB | 12 GiB/core | 56 Gb/s | N/A | /scratch/disk/ |
| cl6242comp[1-2] | Gold 6242 @ 2.8GHz | 32 | 384 GiB | 12 GiB/core | 56 Gb/s | N/A | |
| cl5218comp[1-2] | Gold 5218 @ 2.3GHz | 32 | 192 GiB | 6 GiB/core | 56 Gb/s | N/A | |
| cl6226comp[1-2] | Gold 6226R @ 2.9GHz | 32 | 192 GiB | 6 GiB/core | 56 Gb/s | N/A | |

This partition is open to everyone, with an 8-day walltime.

Hint

Best use case: medium parallel jobs (<384c)
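The ratio column is RAM per node divided by cores per node. The short Python check below recomputes it for the Lake node types in the table above. Reading the result as a ceiling for a per-core memory request (such as Slurm's `--mem-per-cpu`) when a job fills whole nodes is a rule of thumb, not a documented site limit.

```python
# (CPU model, cores per node, RAM per node in GiB), copied from the Lake table
LAKE_NODES = [
    ("Gold 6142", 32, 384),
    ("Gold 6242", 32, 384),
    ("Gold 5218", 32, 192),
    ("Gold 6226R", 32, 192),
]


def ram_per_core(cores: int, ram_gib: int) -> float:
    """RAM/core ratio in GiB, as reported in the table."""
    return ram_gib / cores


for model, cores, ram in LAKE_NODES:
    print(f"{model}: {ram_per_core(cores, ram):g} GiB/core")
# Gold 6142: 12 GiB/core
# Gold 6242: 12 GiB/core
# Gold 5218: 6 GiB/core
# Gold 6226R: 6 GiB/core
```

The same arithmetic reproduces the other tables, e.g. 512 GiB over 128 cores gives the 4 GiB/core listed for Epyc.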

Partition Lake-bigmem

Note

Access to this partition is subject to authorization (8-day walltime); open a ticket to justify your request.

Hint

Best use case: large memory jobs (up to 32c), sequential jobs

Hardware specifications per node:

| login nodes | CPU Model | cores | RAM | RAM/core | InfiniBand | GPU | local scratch |
|---|---|---|---|---|---|---|---|
| none | Gold 6226R @ 2.9GHz | 32 | 1.5 TiB | 46 GiB/core | 56 Gb/s | N/A | /scratch/disk/ |

Lake scratches are available (see Cluster Lake above), as well as a local scratch on /scratch/disk/ (120-day lifetime residency; request it with --constraint=local_scratch).
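Before opening a ticket for a bigmem partition, it can help to compare a shared-memory job's footprint with the per-node RAM listed on this page. The hypothetical helper `partitions_that_fit` below uses values copied from the tables (1.5 TiB taken as 1536 GiB) and returns, smallest RAM first, the CPU partitions whose single node could hold the job. It ignores memory used by the system itself, so leave some headroom.

```python
# Per-node RAM in GiB, copied from the hardware tables on this page
NODE_RAM_GIB = {
    "Lake-short": 96,
    "Lake": 384,           # largest Lake nodes; some have 192 GiB
    "Cascade": 384,
    "Epyc": 512,
    "Emerald-bigmem": 1024,
    "Lake-bigmem": 1536,   # 1.5 TiB
}


def partitions_that_fit(mem_gib: float) -> list[str]:
    """Partitions whose nodes have at least mem_gib of RAM, smallest first."""
    fits = [p for p, ram in NODE_RAM_GIB.items() if ram >= mem_gib]
    return sorted(fits, key=lambda p: NODE_RAM_GIB[p])


print(partitions_that_fit(700))  # ['Emerald-bigmem', 'Lake-bigmem']
```

A 700 GiB single-node job therefore only fits on the two bigmem partitions, both of which require authorization.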

Partition Epyc

Hardware specifications per node:

| login nodes | CPU Model | cores | RAM | RAM/core | InfiniBand | GPU | local scratch |
|---|---|---|---|---|---|---|---|
| none | EPYC 7702 @ 2.0GHz | 128 | 512 GiB | 4 GiB/core | 100 Gb/s | N/A | None |

This partition is open to everyone, with an 8-day walltime.

Hint

Best use case: large parallel jobs (>256c)

There is currently no login node in the Epyc partition; use an interactive session for builds and tests.
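The Interactive session page is the reference for this. As a minimal sketch only, assuming the usual Slurm mechanism (`srun --pty`) and a Slurm partition named `Epyc`, the hypothetical helper below builds the command line without running it. Launching it would be `subprocess.run(cmd)` from a machine where the Slurm commands are available, such as a login node of another partition (assumption).

```python
import shlex


def interactive_cmd(partition: str = "Epyc", ntasks: int = 1,
                    walltime: str = "01:00:00") -> list[str]:
    """Build an srun command that opens a shell on a compute node.

    Assumption: interactive sessions use `srun --pty` and the partition
    is named "Epyc" in Slurm.
    """
    return [
        "srun",
        f"--partition={partition}",
        f"--ntasks={ntasks}",
        f"--time={walltime}",
        "--pty",  # attach a pseudo-terminal so the shell is usable
        "bash",
    ]


print(shlex.join(interactive_cmd(ntasks=4)))
# srun --partition=Epyc --ntasks=4 --time=01:00:00 --pty bash
```

Returning an argument list rather than a string avoids shell quoting issues when the command is later passed to `subprocess.run`.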

The Epyc partition has access to Lake scratches (see Cluster Lake above). There are no Epyc-specific environment modules; use ‘E5’ environment modules.

Cluster Cascade

Cascade scratches are available, from all nodes of the partition, on the following paths:

/scratch/

├── Cascade (general purpose scratch)

└── Cral

‘Cral’ scratch is meant for CRAL users and teams.

Choose ‘Cascade’ environment modules (see Modular Environment).

Partition Cascade

Hardware specifications per node:

| login nodes | CPU Model | cores | RAM | RAM/core | InfiniBand | GPU | local scratch |
|---|---|---|---|---|---|---|---|
| s92node01 | Platinum 9242 @ 2.3GHz | 96 | 384 GiB | 4 GiB/core | 100 Gb/s | N/A | None |

This partition is open to everyone, with an 8-day walltime.

Hint

Best use case: sequential jobs, large parallel jobs (>256c)
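For large parallel jobs, the number of nodes a job spans follows from the cores per node in each table: nodes = ceil(cores / cores per node). The short Python illustration below uses the values listed on this page (32 for Lake, 96 for Cascade, 128 for Epyc). It assumes one rank per core and whole nodes, which may not match every job layout.

```python
import math

# Cores per node, copied from the hardware tables on this page
CORES_PER_NODE = {"Lake": 32, "Cascade": 96, "Epyc": 128}


def nodes_needed(partition: str, cores: int) -> int:
    """Minimum number of whole nodes that provide at least `cores` cores."""
    return math.ceil(cores / CORES_PER_NODE[partition])


for p in CORES_PER_NODE:
    print(p, nodes_needed(p, 384))
# Lake 12
# Cascade 4
# Epyc 3
```

A 256-core job fills exactly two Epyc nodes, but on Cascade it needs three nodes (3 × 96 = 288) and leaves 32 cores idle.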

Partition Cascade-GPU

Hardware specifications per node:

| login nodes | CPU Model | cores | RAM | RAM/core | InfiniBand | GPU | local scratch |
|---|---|---|---|---|---|---|---|
| none | Platinum 9242 @ 2.3GHz | 96 | 384 GiB | 4 GiB/core | 100 Gb/s | 1x L4 | None |

Like partition Cascade above, this partition is open to everyone, with an 8-day walltime.

Hint

Best use case: GPU jobs, training, testing, sequential jobs, large parallel jobs (>256c)
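In Slurm, GPUs are commonly requested through a generic resource, e.g. `--gres=gpu:N`. Whether PSMN names the resource `gpu` (or expects a type such as `gpu:L4`) is an assumption to check against the job-submission pages. The hypothetical helper below validates N against the GPU counts in the tables (2 per node on E5-GPU, 1 on Cascade-GPU) before emitting the directives.

```python
# GPUs per node, copied from the hardware tables on this page
GPUS_PER_NODE = {"E5-GPU": 2, "Cascade-GPU": 1}


def gpu_directives(partition: str, gpus: int) -> list[str]:
    """Return #SBATCH lines for a single-node GPU job.

    Assumption: the GRES is named "gpu" and partition names match Slurm's.
    """
    available = GPUS_PER_NODE[partition]
    if not 1 <= gpus <= available:
        raise ValueError(
            f"{partition} nodes have {available} GPU(s), requested {gpus}")
    return [
        f"#SBATCH --partition={partition}",
        "#SBATCH --nodes=1",
        f"#SBATCH --gres=gpu:{gpus}",
    ]


print("\n".join(gpu_directives("Cascade-GPU", 1)))
```

Checking the count locally gives an immediate error instead of a job that can never be scheduled.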

Partition Emerald-bigmem

Hardware specifications per node:

| login nodes | CPU Model | cores | RAM | RAM/core | InfiniBand | GPU | local scratch |
|---|---|---|---|---|---|---|---|
| none | Platinum 8592+ @ 1.9GHz | 128 | 1024 GiB | 8 GiB/core | 100 Gb/s | N/A | None |

Hint

Best use case: large memory jobs (~128c), sequential jobs

Note

Access to this partition is subject to authorization; open a ticket to justify your request.